Why doesn't my boss (or employee) make better decisions? | #4

a story about cave people, a reflection on the technology adoption curve, and a pledge to help grease the wheels

In this 4th issue of The Pole:

A story about cave people as a metaphor for the technology adoption cycle

Innovators vs Standardizers: you either die trying to convince people that your New Thing is better, or you live long enough to run into someone trying to convince you that their New Thing is better than your Old Thing

The problem with people knowing things (and with not knowing things)

What I’m doing about it

—

I think we've all been there:

Not understanding a decision your boss made

Wondering why an employee won't stop trying to be a hero or won't work as a team

Struggling against annoying, inflexible bureaucracy

I have a theory that these are all the same problem. Let me explain it with a parable.

A story about cave people

Let's turn back the clock to 100,000 BC. Say we're cave people.

We have a problem: we're cold.

One cavewoman, Alice, discovers how to start a fire.

Alice then tells the rest of us.

Some of us are skeptical.

Magic light that warms you? Cool story, sis.

Some curious, open-minded folks try it.

It works for them, too! Thus, it gains momentum.

The skeptical folks slowly take their feet out of their mouths and gather around the flame.

Fast forward a few generations.

The climate changes, soil erodes, food and water disappear. We gotta migrate.

After much traveling, we land somewhere new. It's even colder, but at least there's food. We try to start a fire.

...it doesn't work.

But the skeptical folks are steadfast in their existing beliefs.

Keep trying! It worked before. This is the way.

After trying all day, they finally start a fire. They beam with pride, sinking further into their conviction.

Meanwhile, Alice's great-grandson Bob is tinkering with ways to start a fire more quickly.

But after a whole day of experimenting, he's got nothing.

The skeptical folks laugh.

Dude. This is how it's done. We have a way that works. Why don't you just do what works?

Bob is disheartened, but nonetheless believes there has got to be a better way.

Fast forward a few days and a bunch of experiments... Bob hears a crisp pop.

Holy crap, it worked.

Bob gets a huge dopamine rush.

Alright, what was I doing? How did I do this again? I gotta show everyone!

He rounds up a crowd that is still struggling to start their fire.

The skeptical folks snarl.

You just got lucky. Let's see you do it again.

Some curious, open-minded folks try it.

It works for them, too! Thus, it gains momentum.

The skeptical folks slowly take their feet out of their mouths and gather around the flame.

Innovators vs Standardizers

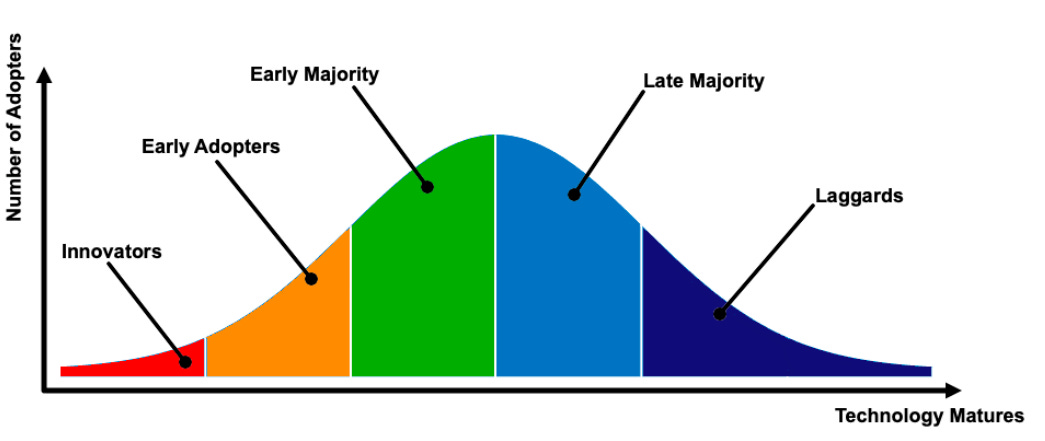

The previous parable is a metaphor for the technology adoption cycle (pictured above):

1. a problem becomes obvious
2. a few innovators (Alice and Bob) try a bunch of stuff to solve it
   - Let's call this strategy experiment-to-win (h/t: Rohit), in the spirit of Peter Thiel’s Zero to One idea
3. some stuff proves itself to work, so the community starts teaching and standardizing it
   - Let's call this strategy shore up the bottom (h/t: Rohit again)
4. times change, desires shift, weaknesses in existing technology are exposed, new problems are created
5. go back to step 1

How does this relate to bosses, employees, and organizations not seeing eye-to-eye?

Well, in the absence of knowledge, experiment-to-win and shore up the bottom directly compete with each other.

To make that connection, let's talk about problem-solving in an abstract (but hopefully useful and not annoying) context.

All problems are solved using some kind of leverage. We cave people started fires by leveraging Alice's observation.

We could leverage it because it was repeatable. If it had only worked once (or just for Alice) it would have been useless to us.

When Bob invented a quicker way to start fires, he leveraged both Alice's existing observations and his new observations.

This leveraging-both-at-once idea, that we can compose or stack models of reality, is critical to our daily lives.

We are able to drive because thousands of parts (engine, wheels, brakes, etc.) are all working together in harmony.

We can use our phones because their electronic components coordinate with each other, which in turn works only because we can rely on the behavior of electricity.

We are able to live because trillions of molecules work together, billions of cells work together, and hundreds of organs work together.

Everything we do is leveraged to an unfathomable degree. We are all walking around on billion-foot-tall stilts.

Which is great...

...if everything works.

Often, all it takes is one weak link in the chain to introduce chaos into the system.

If our liver stops working, we die.

If our heart stops working, we die.

If our... you get the point.

There are strategies to combat this weakness (some combination of decentralization, redundancy, and understanding), but the bottom line is:

using leverage can be risky, so it's important to mitigate risk by modeling reality as correctly as possible

But we can’t know everything. In fact, most people don't know most things (gasp). So, we have to rely on heuristics most of the time.

How often do you sign things you barely skimmed?

Heuristics, yeah baby.

One particularly delightful heuristic is using the amount of time an idea has survived in the collective consciousness as a proxy for how reliable it is. Theoretically,

→ the older the idea,

→ the wider the space of scenarios it has been tested under (and withstood),

→ the sturdier the idea,

→ the more we can trust it.

This is called the Lindy effect.
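For the curious, here is one common way to formalize the Lindy effect. It rests on an assumption not made in the argument above: that the lifespans of ideas follow a Pareto (power-law) distribution with minimum lifespan $x_m$ and shape parameter $\alpha > 1$. Under that assumption, an idea that has already survived to age $t \ge x_m$ has an expected remaining lifespan proportional to its current age:

$$
\Pr(T > x) = \left(\frac{x_m}{x}\right)^{\alpha} \quad (x \ge x_m)
\;\;\Longrightarrow\;\;
\mathbb{E}[\,T \mid T > t\,] = \frac{\alpha\, t}{\alpha - 1},
\qquad
\mathbb{E}[\,T - t \mid T > t\,] = \frac{t}{\alpha - 1}.
$$

With $\alpha = 2$, for example, an idea that has lasted 50 years would be expected to last about 50 more, while one that has lasted 5 years would be expected to last about 5 more. This model only speaks to longevity; reading longevity as reliability is the heuristic step.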

It’s a great heuristic for the individual, but the more people use it, the more it sucks for society. In fact, that's true of heuristics in general.

Here’s why:

We know that we don’t know things.

We also know that we need to know things in order to solve problems AND, more importantly, to not cause more problems.

What’s the best course of action to take if you don’t know anything?

Do what works.

Be slow to let go of old things that have worked for a long time.

Be slow to adopt new things that have only worked for a short time.

Be risk-averse.

But what happens when everyone does that?

Nothing changes. Problems remain unsolved.

The problem with people knowing things (and with not knowing things)

Fortunately, a lot of people do take risks, especially the folks doing the tinkering and the folks on the front lines.

These knowledgeable folks don't need to rely on heuristics. They know how things work. What looks like a risk to everyone else can be a solid investment to them.

When one party is relying on heuristics and the other on experience, that creates an information asymmetry, which makes it harder to agree on what the optimal decision is.

Maybe you're an employee (e.g., Alice or Bob) and you've figured out a better way to do things (experiment-to-win).

Or maybe you're the boss (e.g., the skeptical folks) and you wish your employee would quit being stubborn and stop taking risky, non-standardized approaches (shore up the bottom).

Who's right? As long as both parties know different things and have different levels of confidence in that knowledge... only time will tell.

What I’m doing about it

If you're like me, you find this incredibly frustrating. Although we will never completely collapse the Venn diagram of what each of us knows, I still think we can get better at sharing knowledge.

Teaching well is hard, but doable. Fortunately, I love doing it and thinking about how to do it better. That's where I'm carving out a niche: learning to spread knowledge in a way that minimizes extraneous cognitive load and maximizes enjoyment.

The future is here; it’s just unevenly distributed. Let’s grease those wheels.